Artificial intelligence is a kind of modern alchemy. It promises to put the spark of humanity into inanimate objects. It says it can transmute dross into gold - taking heaps of formless data and magically creating new insights from it.

Of course that is hype, and we know it. We’ve all seen the most lauded and high-profile AI project, IBM’s Watson, descend into irrelevance amid very public displays of incompetence.

The Watson question-answering system won the TV quiz show Jeopardy! in 2011, and the world was its oyster. It was about to solve all the world’s problems, including drought, hunger, cancer, and how to find good music online.

But that cancer project, with the US cancer center Memorial Sloan Kettering, didn’t go well. After seven years, it emerged that the system had been giving unsafe advice. In its defense, the institute said the advice was only hypothetical, as it had never trusted Watson with real patients.

The awful advert in which Watson is more incoherent than Bob Dylan now seems prescient. These days, the only people who still have time to talk to Watson are data center maintenance staff.

Evil thoughts

But what if this were a deliberate ploy to distract us from the real project, where AI shows its true evil colors? That’s a tad fantastical, but there has definitely been a dark side to AI development at the big cloud players, ironically including the company that used to promise it wouldn’t be evil.

Google’s AI project found itself unable to handle the simple task of making AI not evil, in the sense of not being fundamentally racist. Star ethical AI researcher Timnit Gebru warned that large AI systems would have an inherent bias, specifically racist and sexist bias, and could be deceptive. Rather than face the problem, Google sacked her and her colleague Margaret Mitchell, who founded Google’s ethical AI work.

So far, Google’s efforts to relaunch its ethical AI work sound self-serving and unconvincing.

If that happened at Google, no one should be surprised by what happened at Facebook. In 2016, the company was caught red-handed using AI to boost right-wing political campaigns for money, and sharing data with Cambridge Analytica for further nefarious purposes.

The company pulled a face-saving PR move and set up a responsible AI program with a cute name (Society and AI Lab, or SAIL), supposedly to rein in this kind of activity. Years later, it has emerged that AI systems on Facebook helped fuel genocide against Rohingya Muslims in Myanmar, and that they are still feeding the social cesspit of anti-vaxxers, Holocaust deniers and conspiracists.

The reason is clear enough, however much Facebook's paid defenders wring their hands about it in public. Facebook makes money from engagement, and hate and lies deliver just that. Getting rid of hate and lies on Facebook would be bad for business, so the platform promotes the most extreme content.

“When you’re in the business of maximizing engagement, you’re not interested in truth. You’re not interested in harm, divisiveness, conspiracy. In fact, those are your friends,” Hany Farid, a professor at the University of California, Berkeley, who collaborates with Facebook, told Karen Hao in an MIT Technology Review article.

It’s deeply ironic. Facebook wants to say it’s doing something, but if its AI systems actually manage to weed out lies, they get shot down because they reduce traffic. On the other hand, according to Hao, when then-President Trump railed against supposed anti-conservative bias on the platform, Mark Zuckerberg personally increased the AI team’s budget and tasked it with placating the Right to head off the threat of social media regulation.

Automated dumbness

All of these are examples of a fundamental problem: AI is less a source of alchemy than a genie. It can grant wishes, but it answers the question you actually asked, not the one you thought you asked.

“Artificial Intelligence Will Do What We Ask. That’s a Problem.”, the title of an article by Natalie Wolchover in Quanta Magazine, sums this up well.

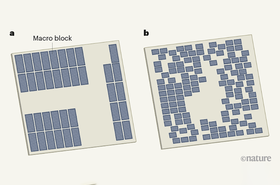

If we can ask the question clearly, and preferably turn it into a game along the lines of Go or chess, as Google did recently with chip design, then AI can get results. If there’s any nuance, prepare to be disappointed.

That’s not an exaggeration. When the Covid-19 pandemic struck, there were high hopes that AI would help with diagnosis and treatment. Many projects were launched, and Michael Roberts, of the Department of Applied Mathematics and Theoretical Physics at the University of Cambridge, UK, recently reviewed the results.

Of 300 papers on diagnosing Covid, not a single one was of any use at all, Roberts found. Some had glaring errors, such as the team that hoped AI could find hidden patterns in chest X-rays from Covid patients. The system was trained on X-rays of adults with Covid and children without it. It was useless at spotting Covid, but it could reliably identify a child.

There are plenty of other problems, ranging from secretive researchers who won’t share their algorithms, so their limitations go unspotted, to results that can’t be reproduced and claims that can’t be tested.

And even when good results are produced, all too often they emerge into an environment, like ethical AI research at Facebook and Google, that is programmed to reject them.

We welcome any genuine results from AI research, but there are days when we survey the hype, and despair.