Inspur, the largest server vendor in China, is launching a set of Open Compute Project-compliant hyperscale rack servers, bringing established AI products designed for Chinese web players to the global market.

The new range includes an AI server deployed in Baidu's autonomous car project, developed to the Chinese Scorpio/ODCC guidelines and now made available in an OCP format. This represents cross-fertilization between the world's two leading open source hardware initiatives, Inspur vice president of data center and cloud, Dolly Wu, told DCD. Other new products include three server modules and a higher-density storage unit, with the announcements made to coincide with the OCP regional summit in Amsterdam.

Scorpio rising

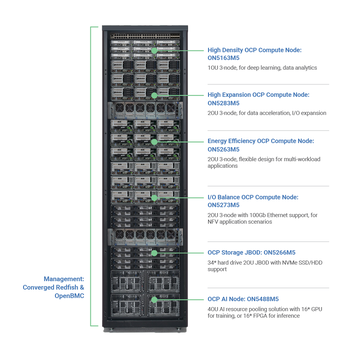

The AI node (ON5488M5) accommodates up to 16 GPUs, such as Nvidia's Tesla, in a 4OU form factor (the OU, or Open Unit, is 48 mm in height, as opposed to 44.45 mm for the standard Rack Unit). This is the highest-density AI node, with the most powerful computing performance available for AI training scenarios, Wu said, and Inspur also offers a version with its own FPGAs for AI inference work.
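
To put the form-factor figures in concrete terms, here is a small Python sketch of the height arithmetic. It uses only the two unit heights quoted above; the constant and function names are illustrative and do not come from any OCP tooling.

```python
# Unit heights quoted in the article, in millimetres.
OPEN_UNIT_MM = 48.0    # OCP Open Unit (OU)
RACK_UNIT_MM = 44.45   # standard Rack Unit (U)

def chassis_height_mm(units: int, unit_height_mm: float) -> float:
    """Nominal chassis height for a given number of units."""
    return units * unit_height_mm

height_4ou = chassis_height_mm(4, OPEN_UNIT_MM)
height_4u = chassis_height_mm(4, RACK_UNIT_MM)
extra_mm = height_4ou - height_4u

print(f"4OU: {height_4ou:.1f} mm, 4U: {height_4u:.1f} mm, "
      f"difference: {extra_mm:.1f} mm")
```

On these nominal figures, a 4OU chassis is roughly 14 mm taller than a conventional 4U box.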

While this is new to the OCP world, a version of the server has been deployed for five years in China. Baidu commissioned a unit for its autonomous car project, which Inspur built to the hardware specifications of the ODCC/Scorpio project, a Chinese open source hardware initiative similar to OCP, but involving regional giants such as Baidu, Tencent and Alibaba.

"We designed this 4OU, 16 GPU box five years ago, for the Baidu autonomous driving project," Wu said. "It has been proven and has been massively deployed for five years." One of the server's major features is the ability to pool GPUs, to increase utilization of the resource, which can be used intermittently for training passes, she explained.

The three new compute nodes are built on Inspur's San Jose Motherboard, the first OCP-accepted Intel Xeon SP motherboard. Compute node 1 (ON5163M5) is a two-socket, 1OU node designed for search engine acceleration, deep learning inference and analytics applications. Compute node 2 (ON5283M5) is a two-socket, 2OU box designed for data acceleration, I/O expansion, transaction processing and image search application scenarios. It can also support different kinds of half-height and half-length external cards. Compute node 3 (ON5273M5), a two-socket, 2OU unit, is intended for NFV applications, with a wide range of half-height and half-length external cards and support for 100Gb Ethernet.

There is also a JBOD storage unit (ON5266M5), which fits 34 hard drives, in a 2OU module that Wu claimed offers "the highest density storage expansion box". It can be used as an expansion module for compute nodes or as a storage pool for the entire rack.

"This is 13 percent more storage than current modules," Wu said. "It is difficult to fit so much into such a small form-factor - remember, the 34 drives can be 14TB or 16TB."

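As a rough check on these figures, the sketch below works out the raw capacity of a fully populated unit and the density gain over a smaller enclosure. The drive count and drive sizes come from the article; the 30-drive baseline is an assumption chosen for illustration, since the article does not say which "current modules" Wu had in mind, and all names are hypothetical. Under that assumption the gain works out to roughly 13 percent; a different baseline would give a different result.

```python
DRIVES_PER_UNIT = 34        # from the article
DRIVE_SIZES_TB = (14, 16)   # from the article
BASELINE_DRIVES = 30        # ASSUMPTION: not stated in the article

def raw_capacity_tb(drives: int, drive_tb: int) -> int:
    """Raw capacity in terabytes, before formatting or redundancy."""
    return drives * drive_tb

for size in DRIVE_SIZES_TB:
    total = raw_capacity_tb(DRIVES_PER_UNIT, size)
    print(f"{DRIVES_PER_UNIT} x {size} TB = {total} TB raw")

# Relative gain in drive count over the assumed baseline enclosure.
gain_percent = (DRIVES_PER_UNIT / BASELINE_DRIVES - 1) * 100
print(f"Gain over a {BASELINE_DRIVES}-drive unit: {gain_percent:.0f} percent")
```
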
Inspur is also offering open source management for its hardware, converging OpenBMC and Redfish. This could be very important, she said: "The reason for the low adoption rate of OCP is the lack of management software. Not everyone has the resources of Facebook. We want to make it easy for people to adopt this platform."

Inspur-ational?

Inspur is the third-largest server vendor in the world, and the largest in China, but deals mostly with giant web players such as Alibaba, Tencent and Baidu.

"We are the only server vendor that participates in all six of the open source data center initiatives," Wu said, before pausing and helping DCD to enumerate these as: Facebook's OCP, LinkedIn's Open19, IBM's OpenPower, Intel's Rack Scale Design, Microsoft's Project Olympus and the Scorpio/ODCC.

Inspur plans to blur the boundaries between these initiatives, and the release of an ODCC server design into the OCP world is part of this effort, she said: "One of the missions of Inspur is to create synergy between all the platforms and use common building blocks across all of them, to make a truly open community."

OCP has lagged behind ODCC/Scorpio in the implementation of AI, she said, because Facebook has shown little interest in using AI until now. "ODCC is designed for wide industry sectors, while OCP has been mainly used by Facebook, and their consumption of AI is not that high at this point," she explained. "Now, they want to analyze behavior to target adverts based on activity."