When it comes to data processing and storage capacity, today’s businesses never seem to have enough. To meet that need, companies are mixing services from traditional in-house data centers, commercial colocation facilities, and cloud providers.

These hybrid setups certainly complicate the IT environment, but companies insist they are necessary to get the job done. Security experts, however, are starting to wonder whether the combination and the complexity might weaken an already vulnerable infrastructure.

Mixing it up

A mixture of technologies can create weaknesses, and in “dynamic” data centers, IT has to run to keep up with new dangers as the infrastructure changes, according to a survey by Dave Shackleford, SANS analyst, instructor, course author, and founder of Voodoo Security.

Shackleford surveyed 430 IT professionals experienced in defending data centers, cloud service operations, or some combination thereof. He then published his findings in the SANS paper The State of Dynamic Data Center and Cloud Security in the Modern Enterprise.

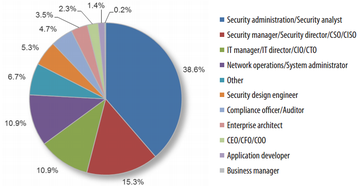

Shackleford’s first step was to categorize the survey respondents according to their job titles, the industry their employer serves, and the size of the organization. Slide 1 captures the job diversity of the respondents.

Among the industries represented, government is the largest group at 18 percent, followed by banking and finance at 14 percent. Shackleford writes, “A mix of other industries including manufacturing, healthcare, consulting, and telecommunications responded to the survey, lending credence to the reality that all types of industries are using or planning to use dynamic data centers.”

Security professionals from enterprises and small businesses alike were represented: 64 percent of the respondents worked for organizations with 1,000 or more employees (a group that includes the 23 percent who worked for organizations with 15,000 or more employees), 23 percent worked for organizations with 100 to 1,000 employees, and 13 percent worked for organizations with fewer than 100 employees.

Hybrid environments

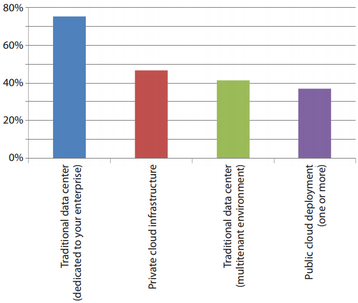

For the survey to have any significance, Shackleford needed responses from professionals who work in what he calls a “dynamic data center” environment: a scalable facility that uses automation and virtualization to meet the demand for IT resources provided by private and public clouds, SaaS, mobile and terrestrial networks, and other sources. Slide 2 shows the breakdown of the infrastructure types that respondents’ organizations currently use.

Shackleford writes, “Organizations have been steadily moving away from a single type of data center deployment for quite some time; both private and public cloud services and technologies have become more integrated into complex application and server architecture designs.”

How well is security working?

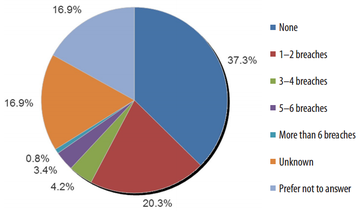

Slide 3 indicates that over 28 percent of the organizations willing to share information knew they had been breached. Large proportions (17 percent each) either didn’t know or wouldn’t say.

Working through the numbers, Shackleford found something unexpected. “Simply experiencing an attack or incident doesn’t mean sensitive data was accessed or stolen,” writes Shackleford. “Unfortunately, of those able to share their breach experiences, many reported having sensitive data accessed by the attackers in at least one attack.”

Also of interest: the industries with the highest attack percentages (education, non-defense government, and IT) have relatively low breach percentages. For the education and IT sectors, 36 percent of all attacks turned into breaches; for government entities, the figure was seven percent.

“On the other hand, the banking and finance sector account for only eight percent of all attacks,” writes Shackleford. “Yet, 60 percent of those attacks converted to breaches. This raises the question of whether preparedness against attack/compromise may be different from preparing for a breach once a compromise has occurred.”
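
To make the conversion figures concrete, the short Python sketch below works through the arithmetic. The percentages are the ones quoted above from the survey; the pool of 1,000 attacks and the function name `sector_breaches` are hypothetical, chosen only for illustration.

```python
# Attack-to-breach conversion rates quoted from the survey, in percent.
CONVERSION_PCT = {
    "education": 36,
    "IT": 36,
    "government (non-defense)": 7,
    "banking and finance": 60,
}

# Banking and finance's share of all attacks, in percent (from the survey).
BANKING_ATTACK_SHARE_PCT = 8


def sector_breaches(total_attacks, attack_share_pct, conversion_pct):
    """Return how many of total_attacks would end as breaches in one sector."""
    sector_attacks = total_attacks * attack_share_pct / 100
    return sector_attacks * conversion_pct / 100


# Hypothetical pool of 1,000 attacks (an assumption, not a survey figure).
banking_breaches = sector_breaches(
    1000, BANKING_ATTACK_SHARE_PCT, CONVERSION_PCT["banking and finance"]
)

# Rank sectors from highest to lowest conversion rate.
ranking = sorted(CONVERSION_PCT.items(), key=lambda item: item[1], reverse=True)

if __name__ == "__main__":
    print(f"Banking breaches per 1,000 attacks: {banking_breaches:.0f}")
    for sector, pct in ranking:
        print(f"{sector}: {pct} percent of attacks became breaches")
```

Under these assumptions, banking and finance would face 80 of the 1,000 attacks, and 48 of those would become breaches. That is 4.8 percent of all attacks, even though the sector draws a small share of them.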

Key findings

The survey continues drilling deeper into the type of cloud deployments employed, the security technologies in place, whether encryption is used, and what defenders consider to be their biggest challenges. Putting it all together, Shackleford came up with several key findings:

- 68 percent are concerned with access management and privileged account management vulnerabilities in both their data center and cloud service operations.

- 64 percent are concerned with application vulnerabilities.

- 58 percent have no visibility into East-West traffic in their data centers or cloud environments.

- 55 percent are dissatisfied with their current attack containment and recovery times.

- 44 percent of those able to share have experienced a breach resulting in the loss of sensitive data.

- 35 percent revealed it takes more than two weeks to implement security change controls.

- 25 percent do not know whether they have experienced attacks.

Shackleford’s conclusions

Shackleford’s overarching concern is the increasing popularity of the dynamic data center model. “Enterprises seem to be evolving from traditional IT infrastructure models to a range of newer, often more complex structures, and both enterprise security and distributed computing appear to be at an evolutionary crossroad,” writes Shackleford. “Breach and incident data reported by IT teams suggest that traditional security strategies and controls struggle to keep up with the risks facing traditional enterprise models and are inadequate for the challenges they face in trying to address dynamic computing environments.”

David S. Linthicum, a consultant at Cloud Technology Partners, agrees. “The on-premises systems that IT manages are typically a mix of technologies from different eras,” writes Linthicum in his commentary: The public cloud is more secure than your data center. “The aging infrastructure is often less secure — and less securable — than the modern technology used by cloud providers simply because the old, on-premises technology was designed for an earlier era of less-sophisticated threats.”

Linthicum adds, “The mixture of different technologies in the typical on-premises data center also opens up more gaps for hackers to exploit.”

However, Shackleford and Linthicum appear to part company on whether cloud providers are the be-all and end-all answer. Shackleford refers to a Cloud Security Alliance (CSA) report, which says: “Fully 80 percent of respondents polled by the CSA for a recently published survey reported their security concerns are serious enough that they are pushing cloud providers for more transparency, improved auditing controls, and better encryption tools.”

Linthicum rebuts that claim, saying, “What public clouds bring to the table are better security mechanisms and paranoia as a default, given how juicy they are as targets. The cloud providers are much better at systemic security services, such as looking out for attacks using pattern matching technology and even AI systems. This combination means they have very secure systems.”

Same concern

Linthicum offers this conclusion: “The bottom line is that any system, whether cloud or on-premises, is only as secure as the amount of planning and technology that goes into the data and applications.”

Under closer scrutiny, one begins to see that Shackleford and Linthicum are concerned about the same thing. Both agree that the dynamic data center model with its mix of technologies is vulnerable, and may be more so than either traditional data centers or cloud service providers.

Note: In the interest of full disclosure, the Shackleford/SANS survey was sponsored by Illumio, an enterprise security firm.