Being able to respond quickly to changing load demands is crucial for any data center, particularly one specializing in colocation on behalf of a number of clients.

For small and medium data centers, the rack or IT enclosure is typically the standard increment of computing capacity that designers use to estimate the requirements of the facility. At rack level, standardization of components such as blade servers, storage arrays and networking equipment allows extra capacity to be slotted into place with minimum disruption.

Adding increments quickly

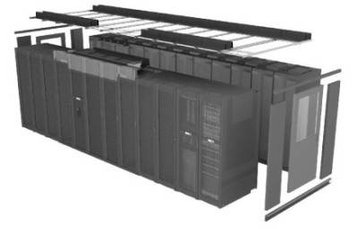

For larger data centers, however, there is often a demand to deploy more IT capacity quickly and in much larger increments. This has led to the appearance of IT pods: groups of IT racks arranged in one or two rows that share common infrastructure elements, including power distribution units (PDUs), containment systems, air handlers and security features.

Although such “pods” can be quickly assembled and installed to ramp up capacity, there is not, as yet, an accepted industry standard for designing them, so data center operators have developed their own internal standards. There are nevertheless some best practice guidelines that can be followed for a successful design.

Organizations should standardize as much as possible the size and overall architecture of their pods so that integrators can prepare product in advance. The pods can then be stocked as standard configurations to reduce lead time. A free-standing pod frame is recommended to serve as a mounting point for air containment systems and services such as networking, power and even cooling ducts and piping. These can then be built out as needed.

Standardizing the design and then deploying a pod-based architecture requires answers to questions such as: how big should the pod be, what should its power capacity be, and how long should the rows of racks be? There are three main drivers for determining pod architecture: the choice of electrical power feed, the physical space available and the average rack density.

In most data centers bulk power is brought to PDUs or remote power panels (RPPs) located strategically throughout the data center. As data centers fill up with IT equipment and upper limits on breaker panel space or transformer loading are reached, it sometimes becomes necessary to serve new racks from PDUs located further away than is optimal. This makes operations more complicated and increases the risk of human error and downtime.

Pods, by contrast, have dedicated electrical feeds. To simplify installation and clarify what can become a complex menu of design alternatives, it is a good idea to specify two classes of pod: a Low Power configuration capable of delivering 150 kW and a High Power alternative rated at 250 kW. Classifying pods in this manner allows the calculations for the necessary cooling architecture, whether air or liquid-based, to be made in advance, which speeds up design and rollout.

Make them as big as you can

The size of a pod will largely be determined by the typical space requirements of a facility, taking into account issues such as room shape, building columns and cooling ducting. To make the best use of space, design the longest pod that is practical for the room. The degree of modularity required (the “chunk” or deployment size), however, is a business decision driven by the rate of load growth expected at the facility.

Average rack density is a simple calculation: the available power in kW divided by the number of racks. Designers should underestimate the expected rack density when balancing infrastructure capacity against space, because it is much costlier to deploy IT below the data center design density than above it. If the installed racks then operate in practice at a higher density, the more expensive resources, power and cooling, are used up before the floor space.
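As a rough illustration of this arithmetic, the sketch below computes the average rack density for a pod and shows how many racks the same power budget can feed if the racks run hotter than planned. The 150 kW figure is the Low Power class mentioned above; the 20-rack pod size and the 10 kW actual density are hypothetical values chosen only for the example, and the function names are illustrative.

```python
import math


def average_rack_density_kw(available_power_kw: float, rack_count: int) -> float:
    """Average rack density: available power (kW) divided by number of racks."""
    if rack_count <= 0:
        raise ValueError("rack_count must be positive")
    return available_power_kw / rack_count


def racks_supported(available_power_kw: float, actual_density_kw: float) -> int:
    """Whole racks the power budget can feed at a given per-rack density."""
    if actual_density_kw <= 0:
        raise ValueError("actual_density_kw must be positive")
    return math.floor(available_power_kw / actual_density_kw)


# Assumed example: a Low Power pod (150 kW) laid out with 20 rack positions.
pod_power_kw = 150.0
rack_positions = 20  # hypothetical pod size

design_density = average_rack_density_kw(pod_power_kw, rack_positions)
print(f"Design density: {design_density} kW per rack")

# If racks actually draw 10 kW each (hypothetical), power runs out first.
powered = racks_supported(pod_power_kw, 10.0)
print(f"Racks that can be powered: {powered} of {rack_positions}")
```

In this assumed case the pod runs out of power with rack positions still empty, which is the situation the underestimation guideline treats as the cheaper way to be wrong: the unused resource is floor space rather than power and cooling.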

Apart from the rapid scaling ability, there are many other advantages to deploying pods in large data centers. One could separate critical applications needing more expensive infrastructure such as dual power feeds and more intense cooling facilities from less critical ones at a pod level. Or one could mix and match Open Compute Project (OCP) and traditional servers in the same data center.

All of these scenarios would benefit from a standardized approach to building and rolling out pod architectures, as long as the key design points are borne in mind.

Robert Bunger is director of data center standards at Schneider Electric. He is co-author of “Specifying Data Center IT Pod Architectures”, available for download on the Schneider Electric site.