The massive demand for content and virtualized services is putting enormous pressure on enterprise networks worldwide, and the pace is not expected to slow anytime soon. Beyond traditional IT network traffic, more than 20 billion things are estimated to be interconnected via the Internet of Things (IoT) by 2020. As network traffic continues to grow unabated, data centers and enterprises are contending with infrastructure, and a workforce, stretched to the limit.

Compounding this explosion in data, growing complexity and cost pressures make it harder to maintain network performance and reliability. As a result, network managers and CIOs need more efficient and effective processes for testing today’s data center interconnect (DCI) networks, from the physical layer to the application layer, to ensure that end-user experience and security comply with service level agreements (SLAs).

A digital deluge

Surging demand for content and a proliferation of connected devices place heavy strain on enterprise networks, particularly on the data center interconnects needed to manage, distribute and exchange data efficiently. At the same time, accelerating digital transformation and process automation are driving faster DCI development. The key challenge is supporting all of these advances with shrinking IT budgets and fewer resources while still providing fast, reliable access and satisfactory performance.

As enterprises strive to provide constant connectivity to both operations and consumers, a growing variety of applications, system architectures and operational requirements is adding to network complexity. DCI speeds already meet or exceed 100G, 400G is expected to become a firm requirement soon, and work is already under way at 600G and 800G. As speeds climb, however, network managers must keep pace while staying within their budget and power constraints.

Serverless and edge computing technologies are relieving some of this pressure by moving processing out of the data center to the network edge or the cloud, but they also create new challenges: bandwidth must be balanced across the network end to end, and data must be transmitted and stored securely.

Testing the limits of DCI

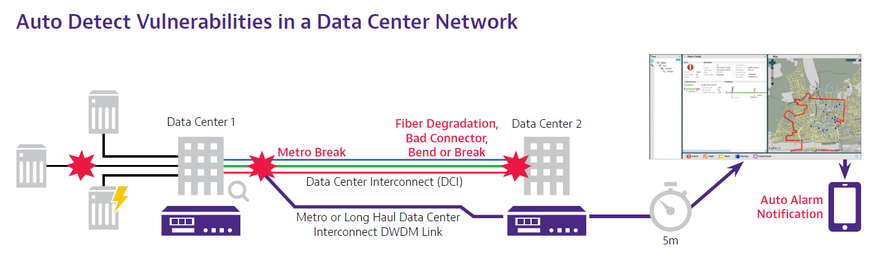

Within the modern data center, there are thousands of links, cables, transponders and connections. While all of these are potential points of failure, they typically receive more attention than the incoming links that feed the data center. It may come as a surprise that many DCI networks are not routinely tested, even though routine testing speeds up troubleshooting and, more importantly, helps prevent faults in the first place. Regular network testing and monitoring are critical to meeting SLAs and internal performance objectives.

To meet the continuing demand for more bandwidth, some data center managers are creating 200G wavelengths using DP-16QAM (dual-polarization 16-ary quadrature amplitude modulation), essentially doubling DCI capacity over the same fiber compared with 100G wavelengths. While this technology helps reduce bottlenecks, it’s important to stress test these new 200G links before adding live traffic, since a particular wavelength may have limitations that prevent it from reaching a 200 Gbps transmission rate, and those limitations only surface under test.
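The capacity doubling follows from simple constellation arithmetic. The sketch below is illustrative only: it assumes both formats run at the same symbol rate (32 GBd is a hypothetical value chosen for the example, not a figure from this article) and ignores FEC and framing overhead. DP-QPSK carries log2(4) = 2 bits per symbol on each of two polarizations, while DP-16QAM carries log2(16) = 4.

```python
import math


def raw_line_rate_gbps(symbol_rate_gbd, constellation_points, polarizations=2):
    """Raw line rate before FEC/framing overhead:
    symbol rate x bits per symbol x number of polarizations."""
    bits_per_symbol = math.log2(constellation_points)
    return symbol_rate_gbd * bits_per_symbol * polarizations


SYMBOL_RATE_GBD = 32.0  # hypothetical symbol rate, for illustration only

dp_qpsk = raw_line_rate_gbps(SYMBOL_RATE_GBD, 4)   # 4-point constellation, 2 bits/symbol
dp16qam = raw_line_rate_gbps(SYMBOL_RATE_GBD, 16)  # 16-point constellation, 4 bits/symbol

print(f"DP-QPSK:  {dp_qpsk:.0f} Gb/s raw")   # DP-QPSK:  128 Gb/s raw
print(f"DP-16QAM: {dp16qam:.0f} Gb/s raw")  # DP-16QAM: 256 Gb/s raw
print(f"ratio: {dp16qam / dp_qpsk:.1f}x")   # ratio: 2.0x
```

The price of the denser 16QAM constellation is that its points sit closer together, so it needs a noticeably higher optical signal-to-noise ratio than QPSK. That reduced tolerance is why a given wavelength may fall short of 200 Gbps and should be verified before carrying traffic.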

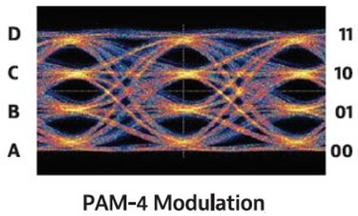

Beyond 200G, the industry has already begun the move to 400G, a shift across the networking ecosystem that brings new kinds of flexibility and scalability. However, 400G technology also brings inherent testing challenges because of added complexity at the physical layer. PAM4 (four-level pulse amplitude modulation) carries two bits per symbol rather than the single bit of NRZ signaling, which narrows the spacing between signal levels and raises the raw error rate on the link. As a result, simply counting errors or testing against a “zero errors” criterion no longer suffices; a more sophisticated understanding of error distribution and statistics is required.
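To see why the distribution of errors matters and not just their count, it helps to look at how PAM4 maps bits to levels. The sketch below assumes the Gray mapping commonly used for PAM4 in 400G Ethernet (bit pairs 00, 01, 11, 10 on levels 0 to 3); the function names are hypothetical. Because neighboring levels differ by one bit, the most likely error, noise nudging a symbol to an adjacent level, costs a single bit, while a rarer two-level slip costs two.

```python
# Assumed Gray mapping of bit pairs to PAM4 levels 0..3.
# Neighboring levels differ in exactly one bit.
GRAY_PAM4 = {(0, 0): 0, (0, 1): 1, (1, 1): 2, (1, 0): 3}
LEVEL_TO_BITS = {level: bits for bits, level in GRAY_PAM4.items()}


def pam4_encode(bits):
    """Map an even-length list of bits to PAM4 levels (two bits per symbol)."""
    if len(bits) % 2:
        raise ValueError("PAM4 needs an even number of bits")
    return [GRAY_PAM4[(bits[i], bits[i + 1])] for i in range(0, len(bits), 2)]


def pam4_decode(levels):
    """Map PAM4 levels back to a flat list of bits."""
    bits = []
    for level in levels:
        bits.extend(LEVEL_TO_BITS[level])
    return bits


def bit_errors(tx_bits, rx_bits):
    """Count positions where the received bit differs from the sent bit."""
    return sum(a != b for a, b in zip(tx_bits, rx_bits))


def errors_after_slip(tx_bits, symbol_index, received_level):
    """Corrupt one received symbol and count the resulting bit errors."""
    rx_levels = pam4_encode(tx_bits)
    rx_levels[symbol_index] = received_level
    return bit_errors(tx_bits, pam4_decode(rx_levels))


tx = [0, 0, 0, 1, 1, 1, 1, 0]
print(pam4_encode(tx))              # [0, 1, 2, 3]
print(errors_after_slip(tx, 2, 1))  # one-level slip (2 -> 1): 1
print(errors_after_slip(tx, 2, 0))  # two-level slip (2 -> 0): 2
```

A single total would lump both cases together as “errors”; separating them by magnitude is one small example of looking at how errors are distributed rather than only how many occur.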

To address these challenges, 400G technology uses forward error correction (FEC) to provide an effectively error-free link at the packet level. Testing must therefore go further, validating the margin that remains and diagnosing issues through both the FEC coding and the PAM4 modulation. Network testing can no longer be confined to a single layer; it must cover the link from the physical layer all the way up to Ethernet.
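Because FEC corrects errors only up to a fixed limit per codeword, the useful question is how close the link runs to that limit. A minimal sketch, assuming the RS(544,514) “KP4” Reed-Solomon code used by 400G Ethernet, which operates on 10-bit symbols and can correct up to (544 - 514) / 2 = 15 symbol errors per codeword. The input format (one symbol-error count per received codeword), the function name and the sample data are all hypothetical; real test equipment exposes this information in its own formats.

```python
from collections import Counter

# Assumed FEC parameters: RS(544,514) over 10-bit symbols ("KP4").
N, K = 544, 514
T = (N - K) // 2  # up to 15 correctable symbol errors per codeword


def fec_summary(errors_per_codeword):
    """Summarize FEC health from per-codeword symbol-error counts."""
    hist = Counter(errors_per_codeword)
    codewords = sum(hist.values())
    if codewords == 0:
        raise ValueError("no codewords to analyze")
    worst = max(hist)  # largest symbol-error count seen in any codeword
    symbol_errors = sum(errors * count for errors, count in hist.items())
    return {
        "codewords": codewords,
        "histogram": dict(sorted(hist.items())),
        # Codewords with more than T symbol errors cannot be corrected.
        "uncorrectable": sum(c for e, c in hist.items() if e > T),
        # Headroom between the worst codeword and the correction limit;
        # a negative value means at least one codeword failed.
        "margin_symbols": T - worst,
        "pre_fec_symbol_error_rate": symbol_errors / (codewords * N),
    }


# Hypothetical capture: mostly clean codewords with a small tail of errors.
sample = [0] * 990 + [1] * 6 + [3] * 3 + [9]
report = fec_summary(sample)
print(report["histogram"])       # {0: 990, 1: 6, 3: 3, 9: 1}
print(report["uncorrectable"])   # 0
print(report["margin_symbols"])  # 6
```

Two links with the same total error count can have very different margins: errors spread thinly across many codewords are corrected easily, while the same errors clustered into a few codewords can push those codewords past the correction limit.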

As DCI networks migrate to higher speeds, it’s also important to run test modules simultaneously to compare and evaluate the results and performance of open application programming interfaces (APIs) and protocols, including NETCONF/YANG, on racks running at 100G, 200G and 400G. Doing so can help pinpoint potential issues and head off infrastructure complications before they arise. Most importantly, it makes use of network automation mechanisms that help reduce human intervention in some scenarios.
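As an illustration of the kind of automation NETCONF/YANG enables, the sketch below builds a standard NETCONF `<get>` request (RFC 6241) with a subtree filter for the `ietf-interfaces` YANG model (RFC 8343), then extracts per-interface `in-errors` counters from a reply. Transport is deliberately omitted; in practice the RPC would travel over an SSH session, for example through a client library such as ncclient. The sample reply and interface names are hypothetical, and which counters a device exposes depends on the YANG models it supports.

```python
import xml.etree.ElementTree as ET

NC = "urn:ietf:params:xml:ns:netconf:base:1.0"      # NETCONF base namespace
IF = "urn:ietf:params:xml:ns:yang:ietf-interfaces"  # ietf-interfaces YANG module


def build_get_interfaces(message_id="101"):
    """Build a NETCONF <get> RPC that filters for the ietf-interfaces subtree."""
    rpc = ET.Element(f"{{{NC}}}rpc", {"message-id": message_id})
    get = ET.SubElement(rpc, f"{{{NC}}}get")
    subtree = ET.SubElement(get, f"{{{NC}}}filter", {"type": "subtree"})
    ET.SubElement(subtree, f"{{{IF}}}interfaces")
    return ET.tostring(rpc, encoding="unicode")


def interface_errors(reply_xml):
    """Return {interface name: in-errors} parsed from an <rpc-reply>."""
    root = ET.fromstring(reply_xml)
    counts = {}
    for intf in root.iter(f"{{{IF}}}interface"):
        name = intf.findtext(f"{{{IF}}}name")
        errors = intf.findtext(f"{{{IF}}}statistics/{{{IF}}}in-errors")
        if name is not None and errors is not None:
            counts[name] = int(errors)
    return counts


# Hypothetical reply from a DCI switch; names and values are illustrative only.
SAMPLE_REPLY = """<rpc-reply message-id="101" xmlns="urn:ietf:params:xml:ns:netconf:base:1.0">
  <data>
    <interfaces xmlns="urn:ietf:params:xml:ns:yang:ietf-interfaces">
      <interface><name>dci-400g-1</name><statistics><in-errors>0</in-errors></statistics></interface>
      <interface><name>dci-400g-2</name><statistics><in-errors>42</in-errors></statistics></interface>
    </interfaces>
  </data>
</rpc-reply>"""

print(build_get_interfaces())
counts = interface_errors(SAMPLE_REPLY)
print([name for name, errs in counts.items() if errs > 0])  # ['dci-400g-2']
```

Because the request and the parsing are plain data handling, the same functions can be pointed at replies from several racks or test modules at once and the results compared side by side.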

Stress reduction

Ultimately, robust test practices must be maintained throughout the entire DCI lifecycle to optimize performance, reduce latency, ensure reliability and maximize capacity utilization. DCI connections should be stress tested on a regular basis, with the goal of identifying potential issues before a fault occurs. With consistent test and measurement practices, engineering teams can find the issues that keep the network from reaching full capacity and resolve them much faster and with less disruption.

Moreover, in today’s increasingly virtualized and cloud-based networks, DCI monitoring needs to be automated and virtualized so that anomalies can be detected, diagnosed and resolved across the entire network infrastructure. The underlying fiber networks, however, still require robust end-to-end testing to maintain peak performance.

Conclusion

As enterprises continue to grapple with growing data volumes and an exponential increase in devices and connected things, the advent of 200G and 400G technology enables DCI networks to keep pace with ever-rising expectations for high-speed, seamless performance. To make this evolution as smooth as possible, it’s vital to apply rigorous test and measurement practices to DCI networks, ensuring that they deliver the efficiency, flexibility and performance needed to meet SLA requirements, today and tomorrow.