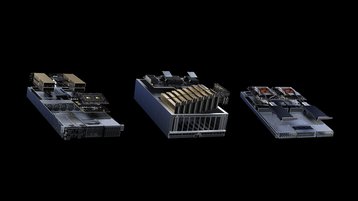

Chip giant Nvidia has unveiled a new modular reference architecture with more than 100 server variations for different compute and networking requirements.

The Nvidia MGX server specification has been adopted by ASRock Rack, ASUS, Gigabyte, Pegatron, QCT, and Supermicro. The trillion-dollar GPU maker claimed that MGX can slash development costs by up to three-quarters and reduce development time by two-thirds to just six months.

“Enterprises are seeking more accelerated computing options when architecting data centers that meet their specific business and application needs,” said Kaustubh Sanghani, vice president of GPU products at Nvidia.

“We created MGX to help organizations bootstrap enterprise AI, while saving them significant amounts of time and money.”

Manufacturers start with a basic system architecture, select their GPU, CPU, and DPU, and then tweak the design to suit a specific workload.
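As a rough illustration of that modular approach, the sketch below models a base design that is specialized by choosing components. It is not Nvidia's tooling or the MGX specification itself: the `ServerDesign` class, the `specialize` helper, the "2U" chassis value, and the DPU and workload labels are all hypothetical names chosen for the example.

```python
from dataclasses import dataclass, replace
from typing import Optional


@dataclass(frozen=True)
class ServerDesign:
    """Hypothetical model of a modular server design (illustrative only)."""
    chassis: str                   # base system architecture, e.g. a form factor
    cpu: Optional[str] = None      # chosen CPU, if any
    gpu: Optional[str] = None      # chosen GPU, if any
    dpu: Optional[str] = None      # chosen DPU, if any
    workload: str = "general"      # workload the design is tuned for


def specialize(base: ServerDesign, **choices: str) -> ServerDesign:
    """Return a copy of the base design with component choices applied.

    The base design is left untouched, so it can be reused for other variants.
    """
    return replace(base, **choices)


# Start from one base architecture (assumed value) and derive two variants.
base = ServerDesign(chassis="2U")

hpc_variant = specialize(base, cpu="Grace CPU Superchip", workload="hpc")
ai_variant = specialize(
    base,
    cpu="GH200 Grace Hopper Superchip",
    dpu="example-dpu",             # placeholder, not a real part name
    workload="ai-inference",
)

print(hpc_variant)
print(ai_variant)
```

Because the base design is immutable and each variant is a copy, many variations can be derived from one starting point, which is the reuse the article describes.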

QCT and Supermicro will launch MGX designs this August, with QCT’s S74G-2U system using the GH200 Grace Hopper Superchip and Supermicro’s ARS-221GL-NR system featuring the Grace CPU Superchip.

The architecture differs from Nvidia's HGX, which is built on high-bandwidth, NVLink-connected multi-GPU baseboards suited to high-end HPC and AI workloads. MGX is less proprietary and makes it easier for system builders to reuse existing designs.

MGX is compatible with the Open Compute Project and Electronic Industries Alliance server racks.