Buoyed by accelerating digitalization and a swelling middle class hooked on the Internet, Asia Pacific is expected to top North America in new data center builds over the next decade. Unfortunately, a large swathe of Asia falls within the tropical climatic zone, where higher year-round temperatures do not lend themselves well to ambient cooling strategies.

At a time when environmental consciousness is growing, is energy efficiency in the tropics a lost cause? PS Lee, founder of Singapore start-up CoolestDC, believes that liquid cooling is an overlooked technology that can give enterprises and data center operators in tropical locations a sustainability boost with substantially reduced energy consumption.

Liquid-cooling for the mass market

Lee, who is also the program director of Cooling Energy Science and Technology at the National University of Singapore (NUS), previously spoke with DCD about a high-efficiency “oblique fin” cold plate that he designed for liquid-cooling microprocessors. After initial lab tests yielded positive results, he worked to commercialize the technology.

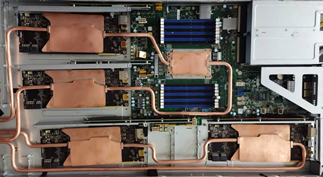

In collaboration with computer manufacturer Tyan, the team modified one of the company's commercially available servers for liquid cooling. With one AMD 32-core Epyc CPU and four Nvidia RTX 2080 GPUs per 1U server, nine unmodified systems installed in a standard rack were pitted against a similar number of liquid-cooled servers equipped with a rear-door heat exchanger.

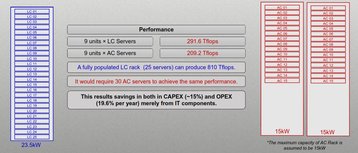

Lee says initial tests showed that a 10kW rack can offer a 30 percent reduction in power consumption over traditional air-cooled servers. From the results, Lee sees an opportunity to leverage liquid cooling in the mass market starting with medium density deployments.
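To put the 30 percent figure in perspective, the short sketch below converts it into annual energy. It is a back-of-the-envelope illustration only, not Lee's methodology: it assumes the 10kW figure is the air-cooled baseline for the rack and that the rack runs at that load around the clock. The function name `annual_savings_kwh` is hypothetical.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def annual_savings_kwh(baseline_kw: float, reduction_pct: float) -> float:
    """Energy saved per year if the rack runs continuously at baseline load."""
    saved_kw = baseline_kw * reduction_pct / 100
    return saved_kw * HOURS_PER_YEAR


# Assumption: 10 kW is the air-cooled baseline; 30 percent is the reported reduction.
print(annual_savings_kwh(10, 30))  # 26280.0 kWh per year
```

Under those assumptions, one rack would save roughly 26,000 kWh a year; a lower average load would shrink the figure proportionally.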

More work, less power

The ever-growing thermal design power (TDP) of CPUs and the increasing use of GPUs in recent years have put liquid cooling in the spotlight. Historically, liquid cooling has been reserved for high-performance computing applications or high-density deployments. Lee says his liquid-cooled servers not only consumed less power than air-cooled ones but performed better, too, and are hence ideal for more traditional workloads.

The reduction in power consumption stems from the removal of high-speed fans and blowers used to move the prodigious amount of air needed to keep temperatures down. With 10 fans or more per server, fans can draw up to 15 percent of what the server consumes, says Lee. While this is old news to proponents of liquid-cooling, Lee says liquid-cooling can also help servers to perform better by overcoming the effects of thermal throttling.

Charts that Lee shared with DCD showed fluctuating performance for air-cooled servers as thermal throttling kicked in to prevent overheating. The liquid-cooled servers, on the other hand, not only performed more consistently but were able to operate at 100 percent of their rated clock speed, compared with an average of just 80 percent for the air-cooled ones.

“When we first started looking at commercializing our technology, the main delta was PUE and cooling energy consumption. However, we soon discovered that IT equipment performance can be greatly enhanced,” said Lee. “We had always focused on overall cooling performance but not the performance of the IT equipment. Many don’t realize that they [might not be] fully unlocking the specifications that they paid for.”

Roadblocks to liquid cooling

If servers can run at peak performance all the time, utilization rises, and perhaps fewer servers are needed. Coupled with the energy reduction, this would make liquid-cooling a must-have, surely?
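The "fewer servers" reasoning can be made concrete with a rough sketch. It rests on a loud assumption that is not from Lee's data: that useful throughput scales linearly with sustained clock speed. Under that assumption, the 80 percent and 100 percent figures above translate into a server count as follows. The function name `servers_needed` is hypothetical.

```python
def servers_needed(air_cooled_servers: int,
                   air_pct: int = 80,
                   liquid_pct: int = 100) -> int:
    """Liquid-cooled servers needed to match the aggregate clock capacity
    of a fleet of throttled air-cooled servers.

    Assumption: throughput is proportional to sustained clock speed.
    Integer ceiling division avoids floating-point rounding surprises.
    """
    capacity = air_cooled_servers * air_pct
    return (capacity + liquid_pct - 1) // liquid_pct


print(servers_needed(9))    # 8
print(servers_needed(100))  # 80
```

Real workloads are rarely bound by clock speed alone, so this is best read as an upper bound on the possible consolidation rather than a forecast.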

Of course, liquid-cooling is inherently more expensive to implement, and even a minor leak can damage or destroy a lot of very expensive equipment. Lee acknowledged that deploying liquid cooling will require trained system integrators or specialists to ensure system reliability, though he says it is manageable with good design.

“The risk of leakage is not a major concern as liquid cooling has been widely used in other applications including automotive and aerospace. To mitigate the risk, we use ruggedized connectors and fittings that comply with the industrial standard, which can withstand high temperature and pressure.”

“We recently developed a new design with copper tubes brazed with the cold plates. Liquid cooling loops within sensitive components are leak-free and the connection with the main inlet and outlet is isolated from this region.”

Lee does not expect a switch to liquid cooling overnight: “Data center operators prefer traditional air cooling as they are concerned about the operational complexity and capital costs associated with the liquid cooling solutions. The perception that switching to liquid cooling requires a major overhaul in the brownfield data center still exists.”

Next steps

Businesses considering liquid cooling would do well to take a phased approach, beginning with a pilot deployment, according to Lee. Indeed, he suggests that data center operators can differentiate themselves by embracing liquid cooling.

“Data center operators typically only provide space and power. But if we want the industry to be more sustainable, one possibility is for data center operators to offer some form of guarantee on energy savings and performance – assuming customers pick from a list of validated equipment.”

For now, Lee says the team is fine-tuning the solution, with a formal product launch expected at the end of Q3 or in early Q4 this year.

“We have had interest from data center operators [looking to] develop their facility to support a liquid cooling system as well as server manufacturers who are providing full warranty to integrate our cooling system in their servers,” said Lee.