This feature appeared in the January issue of DCD Magazine.

In 1971, Intel, then a manufacturer of random access memory, officially released the 4004, its first single-chip central processing unit, thus kickstarting nearly 50 years of CPU dominance in computing.

In 1989, while working at CERN, Tim Berners-Lee used a NeXT computer, designed around the Motorola 68030 CPU, to launch the first website, making that machine the world’s first web server.

CPUs were the most expensive, the most scientifically advanced, and the most power-hungry parts of a typical server: they became the beating hearts of the digital age, and semiconductors turned into the benchmark for our species' advancement.

Intel's domination

Few might know about the Shannon limit or Landauer's principle, but everyone knows about the existence of Moore’s Law, even if they have never seated a processor in their life. CPUs have entered popular culture and, today, Intel rules this market, with a near-monopoly supported by its massive R&D budgets and extensive fabrication facilities, better known as ‘fabs.’

But in the past two or three years, something strange has been happening: data centers started housing more and more processors that weren’t CPUs.

It began with the arrival of GPUs. It turned out that these massively parallel processors weren’t just useful for rendering video games and mining magical coins, but also for training machines to learn - and chipmakers grabbed onto this new revenue stream for dear life.

Back in August, Nvidia’s CEO Jen-Hsun ‘Jensen’ Huang called AI technologies the “single most powerful force of our time.” During the earnings call, he noted that there were currently more than 4,000 AI start-ups around the world. He also touted examples of enterprise apps that could take weeks to run on CPUs, but just hours on GPUs.

A handful of silicon designers looked at the success of GPUs as they were flying off the shelves, and thought: we can do better. Like Xilinx, a venerable specialist in programmable logic devices. The granddaddy of custom silicon, it is credited with inventing the first field-programmable gate arrays (FPGAs) back in 1985.

Applications for FPGAs range from telecoms to medical imaging, hardware emulation, and of course, machine learning workloads. But Xilinx wasn’t happy with adapting old chips for new use cases, the way Nvidia had done, and in 2018, it announced the adaptive compute acceleration platform (ACAP) - a brand new chip architecture designed specifically for AI.

“Data centers are one of several markets being disrupted,” CEO Victor Peng said in a keynote at the recent Xilinx Developer Forum in Amsterdam. “We all hear about the fact that there's zettabytes of data being generated every single month, most of them unstructured. And it takes a tremendous amount of compute capability to process all that data. And on the other side of things, you have challenges like the end of Moore's Law, and power being a problem.

"Because of all these reasons, John Hennessy and Dave Patterson - two icons in the computer science world - both recently stated that we were entering a new golden age of architectural development."

He continued: “Simply put, the traditional architecture that’s been carrying the industry for the last 40 to 50 years is totally inadequate for the level of data generation and data processing that’s needed today.”

“It is important to remember that it’s really, really early in AI,” Peng later told DCD. “There’s a growing feeling that convolutional and deep neural networks aren’t the right approach. This whole black box thing - where you don’t know what’s going on and you can get wildly wrong results - is a little disconcerting for folks.”

A new approach

Salil Raje, head of the Xilinx data center group, warned: “If you’re betting on old hardware and software, you are going to have wasted cycles. You want to use our adaptability and map your requirements to it right now, and then longevity. When you’re doing ASICs, you’re making a big bet.”

Another company making waves is British chip designer Graphcore, quickly becoming one of the most exciting hardware start-ups of the moment.

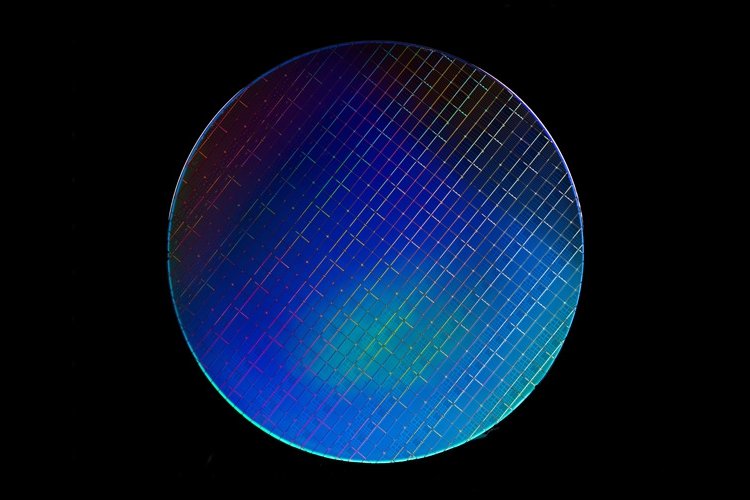

Graphcore’s GC2 IPU has the world’s highest transistor count for a device that’s actually shipping to customers - 23,600,000,000 of them. That’s not nearly enough to keep up with the demands of Moore’s Law - but it’s a whole lot more transistor gates than in Nvidia’s V100 GPU, or AMD’s monstrous 32-core Epyc CPU.

“The honest truth is, people don’t know what sort of hardware they are going to need for AI in the near future,” Nigel Toon, the CEO of Graphcore, told us in August. “It’s not like building chips for a mature technology challenge. If you know the challenge, you just have to engineer better than other people.

“The workload is very different, neural networks and other structures of interest change from year to year. That’s why we have a research group, it’s sort of a long-distance radar.

"There are several massive technology shifts. One is AI as a workload - we’re not writing programs to tell a machine what to do anymore, we’re writing programs that tell a machine how to learn, and then the machine learns from data. So your programming has gone kind of ‘meta.’ We’re even having arguments across the industry about the way to represent numbers in computers. That hasn’t happened since 1980.

“The second technology shift is the end of traditional scaling of silicon. We need a million times more compute power, but we’re not going to get it from silicon shrinking. So we’ve got to be able to learn how to be more efficient in the silicon, and also how to build lots of chips into bigger systems.

“The third technology shift is the fact that the only way of satisfying this compute requirement at the end of silicon scaling - and fortunately, it is possible because the workload exposes lots of parallelism - is to build massively parallel computers.”

Toon is nothing if not ambitious: he hopes to grow to “a couple of thousand employees” over the next few years, and take the fight to GPUs, and their progenitor.

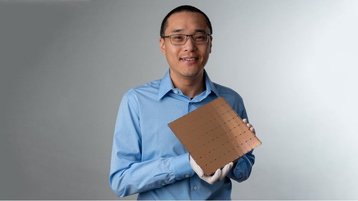

Then there’s Cerebras, the American start-up that surprised everyone in August by announcing a mammoth chip measuring nearly 8.5 by 8.5 inches, and featuring 400,000 cores, all optimized for deep learning, accompanied by a whopping 18GB of on-chip memory.

“Deep learning has unique, massive, and growing computational requirements which are not well-matched by legacy machines like GPUs, which were fundamentally designed for other work,” Dr. Andy Hock, Cerebras director, said.

Huawei, as always, is going its own way: the embattled Chinese vendor has been churning out proprietary chips for years through its HiSilicon subsidiary, originally for its wide array of networking equipment, more recently for its smartphones.

For its next trick, Huawei is disrupting the AI hardware market with the Ascend line - including everything from tiny inference devices to Ascend 910, which it claimed is the most powerful AI processor in the world. Add a bunch of these together, and you get the Atlas 900, the world's fastest AI training cluster, currently used by Chinese astronomy researchers.

And of course, the list wouldn’t be complete without Intel’s Nervana, the somewhat late arrival to the AI scene. Just like Xilinx and Graphcore, Nervana believes that AI workloads of the future will require specialized chips, built from the ground up to support machine learning, and not just standard chips adapted for this purpose.

“AI is very new and nascent, and it’s going to keep changing,” Xilinx’s Salil Raje told DCD.

“The market is going to change, the technology, the innovation, the research - all it takes is one PhD student to completely revolutionize the field all over again, and then all of these chips become useless. It’s waiting for that one research paper.”