As California’s forests glowed, lights at NERSC began to switch off.

For the second time in as many weeks, one of the world’s most powerful supercomputers was being carefully shut down.

Climate change, once an abstraction being dispassionately simulated on the 30 petaflops Cori system, had manifested into reality.

The machine, a pinnacle creation of mankind - a species that prioritized rapid progress over sustainable development - had been waylaid by the impact of that progress on the world.

Like the families that prepared to flee California’s suburbs, Cori could do nothing to stop the effects of anthropogenic climate change. It was truly powerless.

This feature appeared in the January issue of DCD Magazine.

California on fire

In October, after an unusually long dry spell, remarkably high winds, and arid conditions, utility PG&E grew increasingly concerned a massive wildfire could be caused by a broken power line.

Partly responsible for the deadliest and most destructive wildfire in Californian history, 2018’s Camp Fire - which cost $16.5 billion and led to at least 85 civilian deaths - PG&E currently faces bankruptcy and a potential state takeover.

While climate change has exacerbated the causes of wildfires, and will increasingly make things worse, PG&E has been criticized for its lack of preparation, not clearing trees and brush from around power lines, and not having enough emergency staff.

This year, PG&E decided the best way to mitigate the risk of its grid sparking another fire was to switch off parts of that grid, preemptively shutting down power to nearly three million people in central and Northern California during this fire season.

Still, despite the precautions, some fires raged, including the Kincade Fire that burned 77,758 acres in Sonoma County.

Among those caught up in two separate multi-day power cuts was the Lawrence Berkeley National Laboratory (LBNL), home to the National Energy Research Scientific Computing Center (NERSC) and an unlucky Cori, the thirteenth most powerful supercomputer in the world.

“There was some warning,” Professor Katherine Yelick, associate laboratory director for computing sciences at LBNL, told DCD. “It was about five hours.”

It takes around two to three hours to shut down Cori, NERSC director Dr. Sudip Dosanjh said.

“If there's a sudden power outage, you can have issues with the system, maybe some parts fail, so just to be on the safe side, we decided both times to go ahead and bring the big system down.”

NERSC has uninterruptible power supplies and on-site generators, “but that's not enough to power Cori,” which consumes 3.9MW. “It's enough to power the network and some file systems,” Dosanjh said.

“So we kept the auxiliary services up during the entire outage, including the network, and something we call Spin,” which can be used to deploy websites and science gateways, workflow managers, databases and key-value stores.

Those systems could have stayed up indefinitely, as long as there was enough fuel available to refill the generators’ day tanks every six to eight hours.

The first shutdown, beginning on October 10, “was the first time that something like this had actually happened,” Yelick said. The lab had emergency procedures in place for similar events, but “there's a big difference between having a plan in place and then having to execute it,” she admitted. “We did have a plan, but it wasn't as though this was really expected.”

NERSC “certainly learned a lot during the first shutdown that helped with the second,” which began on October 26, she said. “We learned about communications and the generators and how each one works - those kinds of things.”

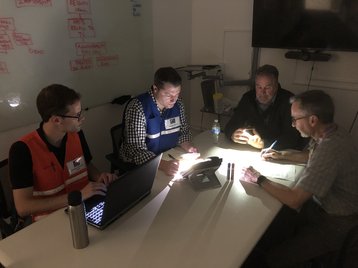

Another crucial lesson was how many people the Emergency Operations Center (EOC) fielded to deal with LBNL’s power cut, which also took down other science resources, including the DNA sequencing lab, the Molecular Foundry, and the Advanced Light Source.

“[The first time] we didn't have a large number of people that were cycling through the EOC,” Yelick said. “And so I think they got pretty tired. We added some additional people the second time. [In future] we would want to make sure that there's enough people that are able to bring the systems up and are confident of doing it on their own, so that we don't overly fatigue a small group of people.”

The second time, NERSC was even able to do some maintenance, running tests on the upcoming community file system ‘Storage 2020.’

Roughly 100 personnel were involved with the emergency operations at the lab, of which around 20 were actually on site. “We're trying to collect that list of exactly how many people were involved right now,” Yelick said. Cori itself only had a few staffers working on it during the shutdown and return, including employees from the supercomputer’s manufacturer, Cray.

The process of returning everything online after power came back took six to eight hours both times.

With various teams interacting, many of them working remotely, communications infrastructure was a key concern. Luckily, cell towers and Internet connectivity mainly stayed online during both outages.

“We were using cell phones,” Yelick said. “That's one of the things that we added in the second outage. And most people work pretty hard to find some way of communicating if they can't, even if it means driving someplace.”

“Long before the power went off, the emergency response teams were using email, text alert, their Slack channel, Twitter,” Computing Sciences Area communications manager Carol Pott said.

“They set up a website and other communications options for people to get the latest alerts. They were trying to cover as many bases as possible to communicate with people who might not have access to the Internet or had other limitations.”

Dosanjh added: “Now, if there were a broader outage - one that affected the entire East Bay, for example - that would be more problematic for all the staff just in terms of being able to get access to things.”

Communication travels both ways, and NERSC’s efforts to keep services online prompted an outpouring of encouragement from many of the 7,000 researchers that use its systems. “I was really pleasantly surprised at all the emails and support that we got from the community,” Dosanjh said.

“The staff worked very hard, they're very, very dedicated to the lab's mission, which is to further human knowledge of science.”

Preserving the mission

One of the many cruel ironies of the shutdown of Cori was that it is one of the tools necessary to fight the ravages of a planet off-kilter.

One workload on Cori may be simulating energy storage solutions that help us break free from our addiction to fossil fuels. Another may be studying the impact of our seemingly inevitable inability to escape our addictive nature.

Cori has been calculating how high the seas will rise, and how large the tornadoes could grow. Just one week before Cori’s first shutdown, it was actually simulating how the forests would burn.

"Results from the high-resolution model show counterintuitive feedbacks that occur following a wildfire and allow us to identify the regions most sensitive to wildfire conditions, as well as the hydrologic processes that are most affected," states an October paper on the Camp Fire by LBNL researchers Erica R. Siirila-Woodburn and Fadji Zaouna Maina.

The Department of Energy “does a lot of simulations of Earth systems,” Yelick said. “So, simulating climate change, as well as looking at alternative materials for solar panels, materials for batteries, and a lot of different aspects of energy solutions.”

Some of this work was delayed by the two outages, pushing back valuable research efforts. "At the end of the year, yes there was some lost time for sure," Yelick said, but she stressed that no data was lost, and that due to the normal backlog of jobs to run on NERSC systems, it "for the most part just changes the delay that people were expecting."

But NERSC does support some areas of scientific research where time is everything. “There's several where it's a major deal,” said Peter Nugent, LBNL scientist and an astronomy professor at Berkeley. “The ones that the Department of Energy is involved with a lot are at the Light Sources - these are very, very expensive machines that they run and scientists get a slot of time and it can be anywhere from a half a day to a few days. And that's it.

“If they don't have these capabilities there for them, they lose their run. That's a huge expense and a huge loss. But because of the nature of the detectors that they're running there, gathering more and more data, it's not possible for them to process it locally and do everything they want. They need to stream it to one of these HPC centers and get things done.”

Nugent’s work is also incredibly time-sensitive. “The research that I'm involved with right now uses supercomputers to search for the counterparts to the gravitational wave detections that the LIGO/Virgo collaboration is making,” he said.

Nugent - senior scientist, division deputy for science engagement, and department head for the computational science department in the computational research division at LBNL - crunches data from the Virgo interferometer in Italy when it spots gravitational wave events, and then tries to capture details on the four-meter Victor Blanco telescope in Chile.

There’s a problem, however: The gravitational wave discovery “usually comes with a large uncertainty in the sky as to where it would be,” so Nugent has to “start taking a bunch of images to follow these events up, then stream this data up to the NERSC supercomputers to process it,” and then take more images, as he hunts for signs of the event.

“Time is of the essence, these are transient events - they fade very rapidly in the course of 24 hours, so we have to get on them immediately. We have to do this search right away. It's a tremendous amount of data.”

When successful, the information gathered can yield important scientific insights. “These are very interesting new discoveries,” Nugent said. “This is the merger of black holes and neutron stars, the latter of which has led to the discovery of where all the elements that are very high on the periodic table - gold, platinum, silver - come from.

“So when somebody calls you up and tells you 'Oh, by the way, the computers are going to be all down.' You're like, 'Oh crap, what can we do?'”

Oh crap

Thankfully, just a few months before, Nugent’s team had already begun to prepare for Cori going down - although at the time, they were thinking of scheduled maintenance.

“We were like ‘what happens if an event goes off during those two days that they're down, what can we do?’” Nugent said. “And so we've looked at porting our entire pipeline to a cluster of computers that are run by the IT department at LBNL, known as Lawrencium.”

To pull this off, Nugent’s team had already put its code in Dockerized containers, making porting to different systems easier. “We did that earlier in the summer when NERSC was down for maintenance, and it worked out really well.

“But then this next thing came up, and we couldn’t use Lawrencium because it [would also go down] when PG&E shut off the power.”

The researchers turned to Amazon. “We applied for and received a special educational grant that gave us compute time over there,” Nugent said.

“And we were able to - with enough advance notice of when this is going to happen - push all of our data, our reference data and our new data to AWS.”

The process worked, but “was sort of last minute,” Nugent said. “It's a real pain, but we managed to get it done and keep it going.”

With more time now, Nugent’s team are looking at other cloud and cloud-like services. “We would love to run it at NERSC all the time, but now we have a backup plan for when this occurs and we’re looking at making it so that it naturally just turns over and goes from one service to another, depending upon the status.”

Commercial providers could form a part of the solution, but Nugent hopes to use government systems where possible. “The Department of Energy runs some smaller clusters, so we're going to talk with them about how we could set something like this up in the future,” he said.

“This is something that the DOE is certainly very invested in making happen, because sometimes there are bugs and they have to take systems down.

“Experimentalists come to rely on these HPC centers more and more for doing their data processing, so they will need to have the capability to shift from one place to another.”

He, like many in the HPC community, envisions the ‘super facility,’ a virtual supercomputer that takes advantage of the best aspects of different HPC deployments and services and combines them.

“It's this idea that you can use the networking and the streaming of the data to a different resource and process it where you want.”

The super supercomputer

That may take time, by which point NERSC could be home to another huge supercomputer: Perlmutter, a system with a peak performance of 100 petaflops that is expected to draw more than 5MW when it launches in late 2020.

The system is named for Saul Perlmutter, who won the 2011 Nobel Prize in Physics for observations of supernovae which proved that the expansion of the Universe has been accelerating. “Saul Perlmutter was the guy who hired me out at the lab back in '96,” Nugent said.

Perlmutter - the person - is currently looking for more distant supernovae: “Now we have a computer named after somebody who is hunting for the same type of explosions in space that we started with some 20 or so years ago,” said Nugent. “It’s come full circle.”

It is not clear how regular grid power cuts will have become by the time the Perlmutter system comes online, or by the time the ~20MW exascale NERSC-10 system launches in 2024.

“It is during a very limited period of time where this is an issue,” NERSC's Dosanjh said.

“Almost all of our rain occurs between November and April, so it's really primarily an issue in October and November.

“It's not something we worry about every day, but there are certainly - as we've learned - occasions where you can be dry, six months after the rain, and there's high wind and high temperatures.”

PG&E has warned that it might use preemptive blackouts on its millions of customers for up to a decade, as it catches up on maintenance it should have undertaken years ago. Communities will have to prepare for sudden outages and fear potential fires.

But the danger with what happened in California is perhaps not just that of loss of life or property. It is a loss of perspective. It is the danger that this becomes the new normal.

“We don't want that,” Nugent said. “We really, really don't want that.”

His hope is that “necessity is the mother of invention,” and that the impact of climate change “will get us to do some interesting things because of this.”

Within Cori, and its successors, small slivers of replica worlds full of interesting things wink into existence. A weather system here, a turbine engine there. Perhaps in one there is a world where this works out, where a pathway out of destruction is found.

But Cori can’t get us there. It can’t change consumption habits or craft policy proposals.

No matter the state of the grid, it can’t change the world beyond its racks. That is our world.

We have the power.