The history of high performance computing (HPC) can be told through the stories of a few key supercomputers whose impact reverberated through an industry marked by the rise and fall of companies racing to build the most powerful systems in the world.

Red Storm is one such system. When the supercomputer was decommissioned in the summer of 2012, the president and CEO of its creator, Cray Inc, summed up what made this particular machine so important.

“Without Red Storm I wouldn’t be here in front of you today,” Peter Ungaro said. “Virtually everything we do at Cray - each of our three business units - comes from Red Storm. It spawned a company around it, a historic company struggling as to where we would go next. Literally, this program saved Cray.”

The machine that saved Cray

To understand what Ungaro meant, we must go back to February 1996, when the American supercomputing manufacturer was acquired by Silicon Graphics (SGI) for $740 million.

Cray was working on the T3E, its second-generation massively parallel supercomputer architecture (after the T3D) and “SGI basically took most of the folks that were working on the T3E project and redirected them to extending the microprocessor-based line that SGI was building,” Cray’s chief technology officer, Steve Scott, told DCD.

Four years later, “SGI spun Cray off and they kept all of the parts of the company that were working on massively parallel processors using microprocessors,” Scott said. “The rest of us became Cray Inc, which is the company that we are today.”

The new entity, which was “relatively small at the time, with revenues of under $200m,” was left working on the X1 and X2 systems - commercially limited products propped up with funding from the NSA - and faced the very real possibility of extinction.

“So that was the environment that we were in back in 2001 when Sandia National Laboratories first started talking to us,” Scott said.

But the relationship between the US Department of Energy’s Sandia Laboratories and Cray got off to an inauspicious start. “The first meeting between Sandia and the bidders - there were two - was on September 11th, 2001,” John Noe, department manager at Sandia, told DCD.

The laboratory, one of three National Nuclear Security Administration research and development facilities, was put on high alert. “That was an interesting day, because we’d started the meeting on the base, and eventually we had to be taken off the base,” Noe said.

“And neither one of the bids was what we were after in the end, it didn’t look too promising,” he added. Cray had tried to pitch its X1 and X2 line, but Sandia wasn’t interested, telling them that vector processors were not the future.

With airports closed, “a bunch of us ended up driving home in a rented van across the country from Sandia to Wisconsin after that,” Scott said.

The fate of Cray, a company whose founder Seymour Cray was known as the father of supercomputing, hung in the balance.

Keeping a market alive

Over the course of a year, and after a lot of meetings, Sandia and Cray slowly grew closer, ultimately agreeing to develop what would become Red Storm - a system more akin to what the old Cray had achieved with the T3D and T3E, and to what Sandia and Intel had done with their ASCI Red system, the first computer built under the Accelerated Strategic Computing Initiative.

But while Red Storm was planned as a massively parallel processing machine in the same vein as ASCI Red, that earlier system had left Sandia worried that it was witnessing a dangerous consolidation in the HPC marketplace, one that would reduce competition and leave any supplier vulnerable to the whims of large customers.

“Intel’s supercomputer system division had basically gone out of business as they delivered ASCI Red to us and it was the last and only of its kind,” Noe said. “The lesson learned from that was that we wanted to make a commercial platform available, based on our technology, and so as part of the contract we made sure that Cray was going to sell it.

“We wanted to continue creating choices in the marketplace, having more than just one vendor option to go to, in the future, was part of our thinking.”

Dave Martinez, project lead for infrastructure computing services at Sandia, concurred: “At the time there was IBM, and I think Red Storm put the competitive nature back into the HPC program and it modernized the way partnerships are formed and how projects are accomplished.

“It was a milestone,” he told DCD.

With Cray in a tough place financially, this meant that Sandia had to rewrite its approach to contract negotiations. “The money they were getting from Red Storm essentially was their only cash flow,” Noe said.

“We had to work very closely with them to make sure they were still viable and able to pay their people and buy their parts and so forth, whilst making sure we still received some value in the case something went wrong.”

Instead of the usual small payment upfront and large payment at the end, Sandia “assigned intermediate deliverables to pay Cray, which kept them afloat and gave us some confidence that we weren’t sinking a whole lot of money into something that we wouldn’t get value back for,” Noe said.

With the contract signed, both teams got to work: “The whole system was designed, really, from architecture to delivery, in just under 20-24 months,” Scott said. “And this is really quite remarkable because it normally takes a lot longer than that to design a system.”

As they planned out the project, Sandia had to simultaneously prepare the shell to house the upcoming system. “We had no idea, when we built the data center, what kind of equipment we were going to place in there, so we basically built the shell for a relatively low price,” Martinez said.

“Not knowing what the future might bring, we decided to build it with larger pipes, thinking about liquid cooling in the future, and possible low airflow pressurization types.”

But Red Storm ended up relying on air cooling. “Back in the day we just had centrifugal fans, and they basically throw air like 60 feet - there was like 80 feet to the middle of the system.”

Martinez found inspiration at a local power company that was experimenting with plug fan technology. “It was a first one of its kind,” he said. Working in partnership with HVAC company Johnson Controls, Martinez flew to Ireland to test out air conditioning firm EDPAC’s fan units and came up with a concept of pressurizing the floor, working all of the CRAC units in unison, circling around the Red Storm computer.

The motor on the unit was not designed with that in mind, however, so Martinez flew to Milwaukee to use ABB’s latest drive, which was still in the test phase. After some tinkering, telling EDPAC to change some of its “control schemes to cold site control instead of return air,” and instructing them to adopt ABB’s drives, Sandia found success.

“That’s one of the first big data centers to be cooled by plug fan cooling,” Martinez said. “Now, basically 95 percent of big data centers, if they have CRAC units, are cooled by using plug fans. The centrifugal fan has been replaced because it’s not as accurate at cooling as a plug fan.”

This concept allowed Sandia to cool “the largest powered machine we’d ever seen,” at about 40kW per rack, “quite comfortably.”

Getting it running

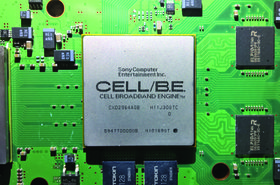

With a shell built, Sandia and Cray started installing the system and linking the components together. “It was a 3D Torus interconnect with a custom router chip called SeaStar that Cray designed. That network was using a little over three gigabits per second signaling rate,” Scott told DCD. “So it was competitive with the InfiniBand networks of the day.”

Red Storm used AMD Opteron processors, with an initial installation of 140 cabinets, taking up 280 square meters (3,000 sq ft) of floor space, operating on “a microkernel that was largely developed by Sandia with Cray involvement called Catamount.”

With a fast turnaround of less than two years, the system “had a very… let’s call it ‘fresh’ software stack,” Scott said. “And so it was not the most stable machine and we proceeded to spend the next two years hardening it into a much more production-worthy machine.”

He added: “Eventually, for example, the microkernel evolved to using Linux and we developed what’s known as Compute Node Linux which is essentially the line of scalable operating systems that we still ship today.” This operating system, Scott said, allowed Cray to corner the lucrative HPC market in weather forecasting, which requires high operational reliability.

Red Storm also saw several hardware improvements. It launched with a theoretical peak of 41.47 teraflops in 2005, and was upgraded to 124.42 teraflops in 2006 and to 284.16 teraflops in 2008, as AMD quickly iterated its Opteron line from single-core to dual-core and finally to quad-core processors.

“Because of the scalability of the design, it was certainly one of the seminal events in our growth from teraflops to petaflops,” Noe told DCD. “We effectively demonstrated that with Red Storm.”

The adoption of Opteron chips also served as “a bit of a wake-up call to Intel,” Scott said. “They were really not focused on meeting the needs of the HPC community during the early 2000s, while AMD moved to 64-bit addressing, integrated memory controllers and integrated network ports. All three of those things were very soon thereafter taken up by Intel.”

As it silently set about reinvigorating competition in the chip and supercomputing space, Red Storm was also working on other things - secret things. The computer’s classified work is described by the laboratory as solving “pressing national security problems in cyber defense, vulnerability assessments, informatics (network discovery), space systems threats and image processing,” as well as simulations involving nuclear weapons research.

One classified project was made public, however - Operation Burnt Frost.

After the US lost control of a military reconnaissance satellite soon after its launch in late 2006, concerns were raised over whether it would accidentally spray a cloud of highly toxic hydrazine fuel over whichever country was unlucky enough to serve as USA-193’s crash site.

It took until January 8, 2008 for the Burnt Frost program to be created, with a decision to shoot down the satellite taken soon after. The US Navy and the Aegis Ballistic Missile Defense then had just a few weeks to pull it off. They turned to Red Storm.

The supercomputer versus the satellite

Sandia simulated thousands of variations of strikes against the bus-sized satellite traveling 17,000 miles per hour, 153 miles above Earth, before finally getting the approval from President George W. Bush to proceed with a takedown. Launched on February 20, the strike was a total success - although it drew criticism from China and Russia, which claimed the whole exercise was a cover for testing an anti-satellite weapon.

Lt. General Henry A. Obering III, the director of the Missile Defense Agency, said in a promotional video for the initiative: “Not only can we hit a bullet with a bullet, we can hit a spot on the bullet with a bullet.”

Red Storm was also involved in unclassified projects, including climate modeling. “They wanted to be absolutely certain that there was no way for code running in the open partition to read any data or see any activity or code that was running in the classified partition,” Scott said.

“And so they asked us to build a couple of large sets of switches that would allow us to mechanically disconnect the interconnect.”

With previous systems, such as Intel’s Paragon, moving between classified and unclassified work was a risk because they were not “designed to be switchable,” Noe said.

“We had to do some operational things to be able to move it back and forth between different security realms - and, unfortunately, that involved disconnecting pieces and parts and turning it off, which is never a good thing to do with a big computer. So we would lose component parts every time we tried to switch it back and forth.”

To address this, Sandia experimented with backplane disconnect units for ASCI Red, but took the idea a step further with Red Storm. The laboratory and Cray created a ‘Red-Black Switch,’ which allowed the system to fully run classified or unclassified material, or be split to run each simultaneously - with a physical ‘air gap’ ensuring security.

“The reconfiguration architecture that Red Storm embodied was quite remarkable,” Noe added.

After a long life of both classified and unclassified simulations, the system was decommissioned in 2012. But its legacy is still visible in the industry.

As part of its efforts to ensure the HPC market stayed competitive, “the contract specifically called for Cray to sell a system derived from Red Storm as a commercial product,” Noe told DCD.

And, indeed, Cray did - selling the system as the commercially successful XT3. That was followed by the XT4 and XT5, which were in turn replaced by the XE system in the late 2000s and the Cray XC system in 2012, which is what the company ships today.

Red Storm was developed with an initial budget believed to be around $72m; sales of its successors have gone on to bring in billions.

“Red Storm was the system that has allowed Cray to grow to several times the size that we were, and be successful over the years,” Scott said. “It all started with this system.”

As for Sandia, after Red Storm, it was left with a data center built with liquid cooling in mind and with three feet of raised floor space. “Since then we’ve placed several different systems in there. We’re able to do liquid cooling, and air cooling in the same venue,” Martinez said.

“We bought a couple of other air-cooled systems and we built some nice containments over them. We designed our own containments and we deployed them here. We started doing air-side containment before it was really out there.”

Looking back at Red Storm, Martinez told DCD: “It was a great system. Now, we have other systems that we’re working on that I can’t say much about that equal Red Storm in impact at its time.”

This article appeared in the February/March issue of DCD Magazine.