5G, IoT and big data are driving the need for more distributed data centers and high-performance edge computing. Today, network traffic continues to grow faster than compute, and much of that growth comes from machine-to-machine communication supporting mission-critical applications. Cloud and data center operators are under pressure to ensure that their networks deliver high throughput efficiently and avoid the bottlenecks that degrade application performance. Meeting this challenge makes networking the most critical lever for improving application performance.

When it comes to virtualization, networking is sometimes perceived as lagging behind the other components of the cloud, such as compute and storage. The adoption of software-defined networking (SDN) has brought many benefits to data center environments because it lets the network match the flexibility and agility of the cloud. However, network virtualization has also added to the already accelerating proliferation of virtual machines (VMs), creating even more code, file, and server bloat and increasing overall system latency.

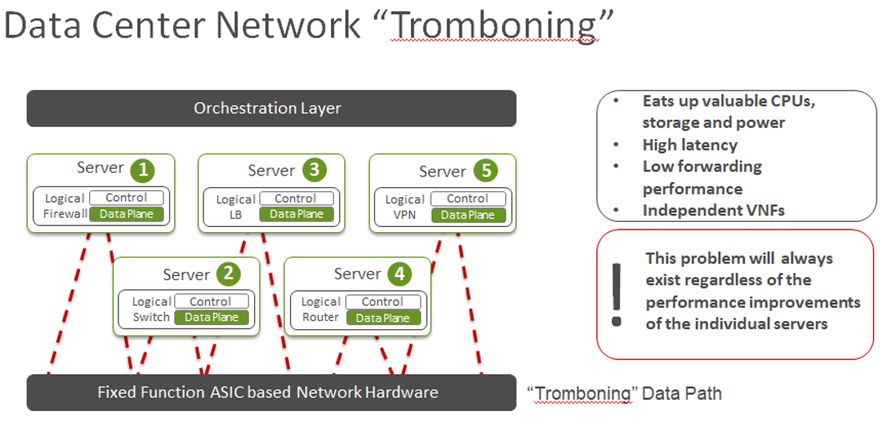

Network functions that were formerly hardware-based, such as routing, switching, gateways, firewall/security and load balancing, have been converted into Virtualized Network Functions (VNFs) running in VMs. With VMs now handling so many network functions, not only has server bloat increased, eating up the data center’s valuable compute and storage resources, but the resulting service chains can also add network latency by sending packets along circuitous paths through the data center. A packet may now pass through numerous VMs, each potentially housed on a different server, so the data plane path often ends up “tromboning” back and forth between them. On top of this serpentine pathway, each VM carries its own, sometimes redundant, data plane implementation to move the packet along its meandering journey.
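To make the “tromboning” effect concrete, the sketch below counts how many times a packet crosses the physical network while visiting each VNF in a service chain. It is a toy model under stated assumptions: the host names, the VNF placement and the `Vnf`/`fabric_crossings` names are hypothetical, and it ignores hops inside a host and the actual per-hop latency, which vary by deployment.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Vnf:
    name: str
    host: str  # physical server the VNF's VM is placed on (hypothetical)


def fabric_crossings(src_host: str, chain: List[Vnf], dst_host: str) -> int:
    """Count trips across the physical network as a packet visits each VNF
    in order, i.e. every time consecutive hops sit on different hosts."""
    path = [src_host] + [vnf.host for vnf in chain] + [dst_host]
    return sum(1 for a, b in zip(path, path[1:]) if a != b)


# Hypothetical placement: the VMs for one service chain are spread across hosts.
chain = [Vnf("firewall", "host-b"), Vnf("load-balancer", "host-c"), Vnf("router", "host-b")]
print(fabric_crossings("host-a", chain, "host-d"))      # 4: a->b->c->b->d

# The same functions collapsed into a single data plane on the source host.
collapsed = [Vnf(v.name, "host-a") for v in chain]
print(fabric_crossings("host-a", collapsed, "host-d"))  # 1: a->d
```

Even in this simplified model, spreading three functions over two other servers turns one trip across the fabric into four.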

Streamlining the data plane

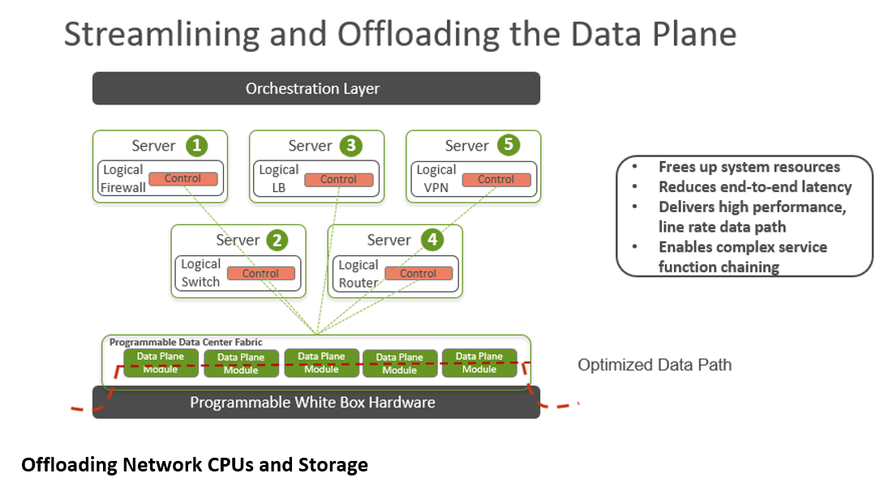

Offloading data plane functions from numerous VMs and collapsing them into a single streamlined data plane can dramatically reduce network latency, increase throughput and free up valuable CPU resources, improving data centers’ overall system and application performance. For example, offloading virtual switch functions provides significant data plane acceleration. Generally speaking, the more features that can be collapsed, or integrated, into the data plane fabric, the more efficiently the network and the applications running on it perform. Offloadable features include, but are not limited to, vRouter, vGateway and virtual load balancing, as well as security/firewall and other networking functions.
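The idea of collapsing several functions into one data plane can be sketched as a single per-packet pipeline: firewall, load balancing and routing run back to back in one pass instead of in separate VMs. This is a conceptual model only, assuming a hypothetical HTTPS-only policy, a hypothetical virtual IP and backend pool, and a hypothetical routing table; it is not the API of any real vRouter, vSwitch or offload product, where these stages would run in a NIC, switch or fast-path software.

```python
from dataclasses import dataclass, replace
from ipaddress import ip_address, ip_network
from typing import Callable, List, Optional


@dataclass(frozen=True)
class Packet:
    src_ip: str
    dst_ip: str
    dst_port: int
    out_port: Optional[str] = None  # set by the routing stage


# Each stage returns the (possibly rewritten) packet, or None to drop it.
Stage = Callable[[Packet], Optional[Packet]]


def firewall(pkt: Packet) -> Optional[Packet]:
    # Hypothetical policy: allow only HTTPS traffic.
    return pkt if pkt.dst_port == 443 else None


VIP = "10.0.0.100"                     # hypothetical virtual IP
BACKENDS = ["10.0.1.10", "10.0.1.11"]  # hypothetical backend pool


def load_balancer(pkt: Packet) -> Optional[Packet]:
    # Rewrite the virtual IP to a backend chosen deterministically from the
    # last octet of the source address (a stand-in for a real flow hash).
    if pkt.dst_ip != VIP:
        return pkt
    last_octet = int(pkt.src_ip.rsplit(".", 1)[1])
    return replace(pkt, dst_ip=BACKENDS[last_octet % len(BACKENDS)])


ROUTES = {  # hypothetical routing table: prefix -> egress port
    ip_network("10.0.1.0/24"): "port-2",
    ip_network("0.0.0.0/0"): "uplink",
}


def router(pkt: Packet) -> Optional[Packet]:
    # Longest-prefix match; the default route guarantees at least one match.
    addr = ip_address(pkt.dst_ip)
    best = max((net for net in ROUTES if addr in net), key=lambda n: n.prefixlen)
    return replace(pkt, out_port=ROUTES[best])


# One pipeline, one pass per packet, instead of a separate VM per function.
PIPELINE: List[Stage] = [firewall, load_balancer, router]


def process(pkt: Packet) -> Optional[Packet]:
    for stage in PIPELINE:
        result = stage(pkt)
        if result is None:
            return None  # dropped by this stage
        pkt = result
    return pkt


print(process(Packet("192.168.5.7", VIP, 443)))
```

Adding another offloaded feature in this model means appending one more stage to `PIPELINE`, which mirrors the point that each function integrated into the data plane avoids another detour through a VM.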

For environments that support them, cloud-native or container network functions (CNFs) offer further efficiencies. A VM typically requires much more code and many more resources than a container. In addition to enabling microservices, CNFs deliver a level of agility and portability that VM-based VNFs cannot match.

Offloading networking from CPUs and storage

While the data plane can be streamlined in software within existing VM-based data center networks, offloading work from CPUs and storage can be taken further with some additional hardware. SmartNICs, FPGAs and P4-programmable switches can all offload network-related CPU cycles, along with data plane code and functions, from servers and storage systems. FPGAs and P4-programmable switches (P4 is an open-source language for programming packet processing) add the advantage of programmability and provide significantly more compute power for networking functions.
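Much of the programmability of a P4 switch comes from match-action tables: the P4 program declares which header fields a table matches on and which actions it may apply, and a controller installs entries at runtime. The Python model below is only a conceptual sketch of an exact-match table, not P4 syntax and not the P4Runtime API; the table, MAC address and port number are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Tuple

Headers = Dict[str, object]
Action = Callable[[Headers], Headers]


def set_egress(port: int) -> Action:
    # An action with a runtime parameter, analogous to a P4 action argument.
    def action(hdr: Headers) -> Headers:
        return {**hdr, "egress_port": port}
    return action


def drop(hdr: Headers) -> Headers:
    return {**hdr, "drop": True}


@dataclass
class ExactMatchTable:
    key_fields: Tuple[str, ...]  # header fields the table matches on
    default_action: Action       # applied on a table miss
    entries: Dict[tuple, Action] = field(default_factory=dict)

    def add_entry(self, key: tuple, action: Action) -> None:
        # Stands in for the control plane installing a rule at runtime.
        self.entries[key] = action

    def apply(self, hdr: Headers) -> Headers:
        key = tuple(hdr[f] for f in self.key_fields)
        return self.entries.get(key, self.default_action)(hdr)


# Hypothetical L2 forwarding table: unknown destinations are dropped.
l2_forward = ExactMatchTable(key_fields=("dst_mac",), default_action=drop)
l2_forward.add_entry(("aa:bb:cc:00:00:01",), set_egress(3))

print(l2_forward.apply({"dst_mac": "aa:bb:cc:00:00:01"}))
print(l2_forward.apply({"dst_mac": "ff:ff:ff:ff:ff:ff"}))
```

In this model, changing forwarding behavior means adding entries or new tables rather than replacing hardware, which is the property the surrounding text credits to programmable switches.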

Programmability provides the flexibility to deliver custom network applications for specific use cases and future-proofs the network investment, since the network can be reprogrammed to meet unforeseen needs and new applications. It also prolongs the lifetime of the networking hardware, which can be updated to support newer protocols and features. Combining open-source software with programmability lets new network functions be developed at the pace of software release cycles, rather than waiting years for new silicon to deliver new features.

Programmability and server offload also make it possible to apply machine learning (ML), which can lead to network automation and, ultimately, AI-based networking capabilities. These can include zero-touch provisioning as well as self-configuring, self-healing, self-remediating, self-monitoring and self-optimizing networks. Combining open-source software with open networking white box hardware reduces CapEx, while automation reduces OpEx for data center operators. Programmable networking hardware also fosters a robust community of solution providers, allowing data center operators to avoid silos of proprietary, locked-in solutions.

Data center renaissance

Last fall, IBM cemented the legitimacy of hybrid cloud and the commercial open-source business model when it announced a $34 billion deal to acquire Red Hat. IBM president and CEO Ginni Rometty proclaimed, “IBM will become the world’s #1 hybrid cloud provider, offering companies the only open cloud solution that will unlock the full value of the cloud for their businesses.”

Hybrid cloud is here to stay because enterprises have different technological needs for different applications, whether they are best served on premises, in data centers or public cloud environments.

Network virtualization has taken about as long to mature as the original conversion of data centers into virtualized cloud environments: a little over a decade. Because SDN and network functions virtualization (NFV) could follow the open-source blueprint established by the Linux project, networking has been able to catch up to the speeding tech train that is the cloud.

Today, networking has not only caught up to the cloud but, with programmability, automation, ML and AI capabilities, can now add value to the stack. Need a fully isolated data center network to test a new game or application? New network slicing capabilities can take care of that. Just added a few hundred or a few thousand containers to your environment? Self-configuration and zero-touch provisioning can handle that automatically.

SDN is now capable of supporting all the needs of the software-defined data center. Years of work by open-source groups and projects are finally culminating in low-latency, low-cost, automated solutions that work across multiple environments. These have brought within reach capabilities that used to be available only to hyperscalers (AWS, Azure, GCP, etc.) with vast resources and large engineering teams.

Open source has democratized the software-defined data center for the rest of us, and streamlining the data path can deliver order-of-magnitude improvements in overall network efficiency. The question is no longer whether your network can rapidly and flexibly deliver the throughput that mission-critical applications require, but how your business plans to benefit from it.