Microsoft has introduced a new hardware architecture into its cloud data centers, hoping to considerably increase network performance - at no extra cost to customers.

Accelerated Networking offloads some software-defined networking (SDN) functions from host CPUs to purpose-built network interface cards (NICs), reportedly helping individual servers reach throughput of up to 30Gbps.

“By moving much of Azure’s software-defined networking stack off the CPUs and into FPGA-based SmartNICs, compute cycles are reclaimed by end user applications, putting less load on the VM, decreasing jitter and inconsistency in latency,” Gabriel Silva, senior program manager for Azure Networking R&D, said in a blog post.

In silico

Accelerated Networking is enabled by NICs based on field-programmable gate arrays (FPGAs) - integrated circuits consisting of logic blocks that can be reconfigured on the fly to speed up specific workloads.

The technology has been around since the 1980s, but has seen a surge of interest in recent years with the emergence of new types of resource-intensive tasks like machine learning, bulk video processing and genomics.

FPGA-based NICs are being proposed as a solution to data center networking bottlenecks because they can efficiently process bit-intensive, packet-based workloads without the overhead of general-purpose CPU instruction sets.

Microsoft’s Accelerated Networking also relies on Single Root I/O Virtualization (SR-IOV) - an extension to the PCI Express (PCIe) specification that allows a single device, such as a network adapter, to divide access to its resources among physical and virtual functions, so that virtual machines can reach the hardware directly.
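As a rough illustration of what "physical and virtual functions" look like in practice, the sketch below reads the standard Linux sysfs attributes `sriov_totalvfs` and `sriov_numvfs`, which the kernel exposes for an SR-IOV-capable physical function. It is a minimal sketch for a Linux host with such an adapter, not a description of how Azure manages its SmartNICs; the function name `sriov_status` and the `sysfs_root` parameter are assumptions introduced here for illustration.

```python
from pathlib import Path
from typing import Dict, Optional


def sriov_status(iface: str, sysfs_root: str = "/sys/class/net") -> Optional[Dict[str, int]]:
    """Return SR-IOV virtual function counts for a network interface.

    Returns None when the interface's device does not expose SR-IOV
    attributes (for example, a loopback or purely virtual interface).
    `sysfs_root` is a hypothetical parameter added so the lookup can be
    pointed at a different directory tree.
    """
    device = Path(sysfs_root) / iface / "device"
    total_path = device / "sriov_totalvfs"   # max VFs the device supports
    enabled_path = device / "sriov_numvfs"   # VFs currently enabled

    if not total_path.is_file():
        return None

    total = int(total_path.read_text().strip())
    enabled = int(enabled_path.read_text().strip()) if enabled_path.is_file() else 0
    return {"total_vfs": total, "enabled_vfs": enabled}
```

Calling `sriov_status("eth0")` on a host whose adapter supports SR-IOV would return the two counts; the interface name `eth0` is only an example and will differ between systems.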

The technology is now available at no extra charge on most general-purpose and compute-optimized instance sizes on Azure, including D/DSv2, D/DSv3, E/ESv3, F/FS, FSv2, and Ms/Mms. It is supported on both Windows- and Linux-based instances.

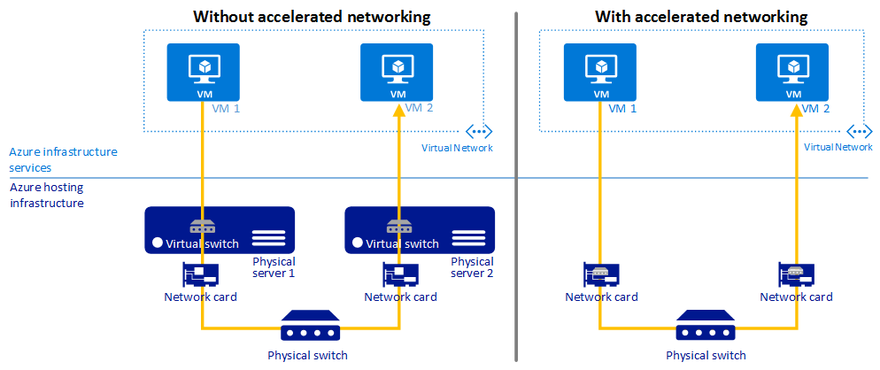

“Without accelerated networking, all networking traffic in and out of the VM must traverse the host and the virtual switch. The virtual switch provides all policy enforcement, such as network security groups, access control lists, isolation, and other network virtualized services to network traffic,” Microsoft employees Jim Dial and Gabriel Silva explained.

“With accelerated networking, network traffic arrives at the VM’s network interface (NIC), and is then forwarded to the VM. All network policies that the virtual switch applies are now offloaded and applied in hardware.”
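The difference between the two data paths described above can be sketched as a toy model. This is purely illustrative: the `Packet` class, the `deliver` function and the hop labels are assumptions made up for this example and do not correspond to any Azure API. The point it shows is that the same policies are evaluated either way; only the place where they are applied changes.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass(frozen=True)
class Packet:
    src_ip: str
    dst_port: int


# A policy returns True when the packet is allowed through.
Policy = Callable[[Packet], bool]


def deliver(packet: Packet, policies: List[Policy], accelerated: bool) -> Tuple[bool, List[str]]:
    """Return (allowed, hops) for a packet heading to a VM.

    Hypothetical model: with acceleration the policy check is attributed
    to the NIC hardware; without it, the packet traverses the host and
    its virtual switch first.
    """
    allowed = all(policy(packet) for policy in policies)
    if accelerated:
        hops = ["SmartNIC (policy applied in hardware)"]
    else:
        hops = ["host", "virtual switch (policy applied in software)"]
    if allowed:
        hops.append("VM")
    return allowed, hops


def allow_https_only(packet: Packet) -> bool:
    # Example rule standing in for a network security group entry.
    return packet.dst_port == 443
```

For instance, `deliver(Packet("10.0.0.4", 443), [allow_https_only], accelerated=True)` reports two hops, while the same call with `accelerated=False` reports three, reflecting the extra trip through the host and virtual switch.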

AWS, the world’s largest public cloud provider, uses its own custom silicon for NICs, designed by Israel-based chipmaker Annapurna Labs, which it acquired for a reported $350m in 2015. However, AWS has bet on application-specific integrated circuits (ASICs) - a different approach that potentially offers lower costs and more customization than FPGAs, but less flexibility.