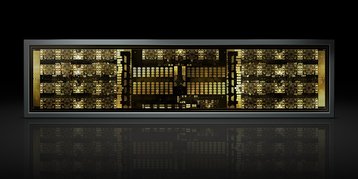

Chipmaker Nvidia has announced a new GPU interconnect fabric, NVSwitch, which allows 16 GPUs to communicate simultaneously.

The on-node switch has a total of 18 ports, each providing 50GB/s of bandwidth, for an aggregate of 900GB/s of switching bandwidth.
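The aggregate figure follows directly from the port count and per-port rate. As a quick sanity check, here is a minimal Python sketch of that arithmetic; the function and constant names are illustrative, not Nvidia's, and it assumes every port runs at its full quoted rate at the same time.

```python
# Figures quoted by Nvidia for a single NVSwitch.
PORTS_PER_SWITCH = 18
BANDWIDTH_PER_PORT_GB_PER_S = 50  # gigabytes per second, per port


def aggregate_bandwidth(ports: int, per_port_gb_per_s: int) -> int:
    """Total switching bandwidth in GB/s, assuming all ports run at full rate."""
    return ports * per_port_gb_per_s


total = aggregate_bandwidth(PORTS_PER_SWITCH, BANDWIDTH_PER_PORT_GB_PER_S)
print(f"{total} GB/s")  # 18 * 50 = 900 GB/s
```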

NVLink’s Switch

“We need bigger GPUs, we need more memory,” Ian Buck, GM of Nvidia’s data center business, told DCD in a pre-briefing at the company’s GPU Technology Conference.

But Volta GPUs currently have six NVLink ports for communication, which allows up to eight GPUs to be connected in a hybrid cube mesh topology.

“If we want to go more, we’re constrained. So we thought about how we can scale up the number of GPUs in a system. You can connect multiple switches together to cascade them, to scale up. We spared no expense on the design of this to make sure we would never be limited by GPU to GPU communication,” Buck said.

During the keynote, Nvidia CEO Jensen Huang highlighted the switch’s transfer rate: “14,000 movies can be transported across this switch in one second. ‘Schwup,’ like that,” he said.

Huang claimed a 20x performance improvement over PCI-Express, although that depends on the PCIe switch used, and the real figure could be closer to 3x. Separate Nvidia marketing mentions a 10x improvement, as well as 5x higher bandwidth “than the best PCIe switch.”

Featuring some two billion transistors, the networking ASIC has been in development for the past two years. At the moment, however, NVSwitch is only available in Nvidia’s DGX-2 servers.

Buck told DCD: “There’s nothing to announce on bringing the NVSwitch to the other guys yet, but it is Nvidia’s goal - and my goal - to make access to our technology through all of our channels.”